Since its launch, OpenAI’s ChatGPT has raised significant privacy concerns, particularly regarding its capabilities in content and image generation.

The model's rapid advancement, especially with GPT-4, has made it increasingly proficient at producing highly realistic and convincing content, including forged documents.

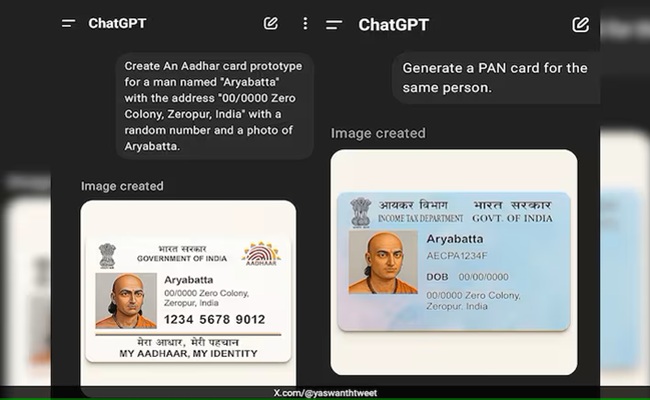

Historically, creating fake government-issued identification documents such as Aadhaar and PAN cards has been a challenge for cybercriminals. However, GPT-4 appears to have simplified the process dramatically.

A number of social media users have recently demonstrated that, with the right prompts, the AI can generate highly convincing replicas of official documents.

Several users have even shared these AI-generated fakes on the microblogging platform X (formerly Twitter).

One user, Yaswanth Sai Palaghat, posted: “ChatGPT is generating fake Aadhaar and PAN cards instantly, which is a serious security risk. This is why AI should be regulated to a certain extent.”

Another user, Piku, added: “I asked AI to generate an Aadhaar card with just a name, DOB, and address... and it created a near-perfect replica. So now anybody can make a fake Aadhaar or PAN card. We keep talking about data privacy, but who’s selling these Aadhaar and PAN datasets to AI companies to train such models? How else could it know the format so precisely?”

Although the AI does not appear to draw on real individuals' personal data when generating these documents, it has reportedly produced fake IDs in the names of public figures, which further highlights the potential for misuse.

The growing ability of AI tools like ChatGPT to generate authentic-looking forgeries poses a serious security risk.

Experts warn that these technologies could increasingly be exploited for cybercrime, identity fraud, and other malicious activities, underscoring the urgent need for robust regulation and ethical oversight in the development and deployment of generative AI.